Lightroom's New Culling Features

Who are these features for?

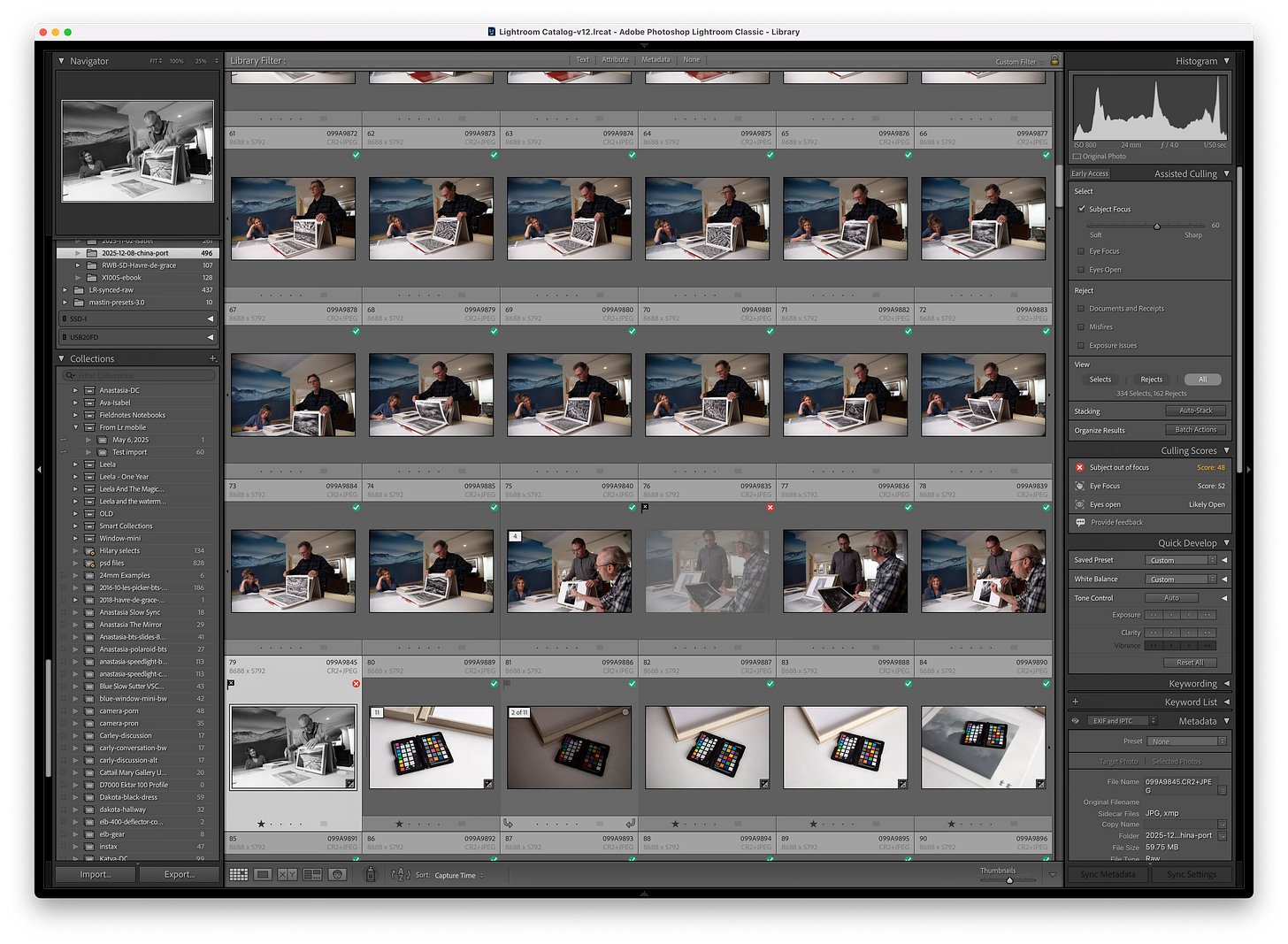

It’s fairly obvious these new features are built for event photographers first and possibly portrait photographers. My bigger question is how useful they might be for photographers or projects that are not “people” focused. Before I attempt to summarize my findings, some readers might be asking, “What new culling features?” If you haven’t seen them, have forgotten about them, or have ignored them since they were introduced, you can turn them on by going to the Library menu and choosing Assisted Culling…

Once you do, you’ll see two new sections in the right-hand panel of the Library module: Assisted Culling and Culling Scores. The Assisted Culling section is where all the action is. The Culling Scores section merely explains how an individual image was scored in the various categories this feature evaluates. I guess that’s okay, but your own judgement is far better.

This is my first go-around with these new features. They are labeled “Early Access”, much like other new features that have shown up in the last year or two, so I would expect them to change in the near term. I promised a follow-up on both Lightroom and Capture One in the last newsletter. I don’t yet have any best practices, or even a solid opinion on how helpful the features are. The two takeaways I can give you so far are that they might help you, and that they can and do work for projects that aren’t people-oriented.

Here’s the scenario for my initial testing. The choice of folder was completely arbitrary, but it happened to contain a couple dozen “people” shots and a few hundred non-people shots. You must choose at least one of three criteria: subject focus, eye focus, or eyes open.

I chose “subject focus” alone, since it was the only criterion really relevant to the vast majority of the folder’s content. I wanted to see how it would determine the subject when there were no people. I settled on a value of 60 for subject focus.

I scrubbed the subject focus slider back and forth in combination with the auto-stack function set to “similar” only (you can also choose time), using the sliders to see what was being rejected and what was being stacked.

Finally, I used “Batch Actions” to set a rejected flag on all of the rejected images, mostly just to test that feature. There are a lot of different things you can do with batch actions, and they’re well worth exploring. In this case, the rejected flag let me quickly eyeball the results of further experiments with the subject focus slider while retaining the results of my initial settings.

I didn’t really need the new culling features, as I’d already culled the images with my own method and divided them into will use, can use, might use, and won’t use, represented by 3-star, 2-star, 1-star, and unrated. I was most interested in 3-star images that the culling feature rejected and unrated images that it selected. In other words, I was looking at the cases where my picks and the culling parameters disagreed most.

So what did I learn? First and foremost, the auto-stacking option “Stack by Visual Similarity”, along with its slider, can be very useful for everyone, whether you photograph events, people, or anything else. It’s especially useful because it works independently of capture time. Grouping visually similar images can really aid culling, whether you flip back and forth between images or use the Compare view. This can save a lot of time, and it seems to work reasonably well on just about any subject.

Here are a few other things I learned, both from my “people”-focused shots and from seeing how images are rejected when there are no people.

Be very, very careful, as none of the criteria that culling uses has any idea what you are trying to do. Intentional motion blur? Gone, for the most part. As someone who does this a lot… yes, that’s not helpful.

People in the background while you intentionally focus on something else? Gone. Even event photographers do this: say, a wedding cake in the foreground, with focus on the knife and the couple in the background. Be careful…

There does not seem to be a way to individually “un-reject” a photo, which would be helpful in cases like the one above. Yes, there are workarounds, such as using a star rating, label, or flag, but the lack of an override certainly makes the built-in “View Selects” a whole lot less useful. In fact, it makes “View Rejects” more useful, since there you can flag or rate the rejects you want to retain. It’s just a lot slower than a simple un-reject would be.

Shallow depth of field always seems to lower the “subject in focus” score. In fact, there are many cases where a shallow DOF causes a reject, because when there are no people Lightroom fails to identify the intentionally sharp area as the subject.

There are many cases where non-people photos are rejected while other very similar photos, with similar sharpness and composition, are not. Even after examining these pairs and where the sharp focus lands, I have no clue why one was “selected” and the other “rejected”. I won’t bother showing you the many examples, but you’d have an impossible time explaining them too. Why? Because every time you think you know, there’s always a counter-example that debunks the theory.

Bottom line: even in their current form, these features can be useful to many photographers regardless of genre, but be very careful to look through the rejects and develop an override mechanism so you don’t lose some of your favorites. I hope to see a bit more control over the parameters and some features focused on other genres.

If you’ve explored these new culling features, we’d love to hear your thoughts. As always, thanks go out to all of our readers, especially those who help us keep the lights on with paid subscriptions.

Workshop Update

We’ve opened a new date (May 2, 2026) for our most popular workshop, Intro To Fine Art Printing. This one-day workshop fills up quickly, as we strictly limit attendance to four participants. You can find more information and sign up over at Les’ website.

Being unable to manually un-reject seems almost fatal to me. Given that you need to employ workarounds, how much of a time benefit are you gaining in your applications?

An AI tool trained on an individual's workflow (ideally on a history of it) could be an appropriate and good use of AI. It would essentially extend one's existing cognitive processes through pattern finding, rather than doing what's common in AI, which is pushing a kind of population norm. Someone must be doing this, if only through the throw-shit-at-the-wall-and-see-what-sticks approach that seems to be de rigueur right now.